The AI Girlfriend Illusion: Unpacking Misleading Mental Health Claims

Some links are affiliate links. If you shop through them, I earn coffee money—your price stays the same.

Opinions are still 100% mine.

Lately, it feels like I can’t scroll through social media without seeing an ad for an AI companion. They promise a lot: a friend who’s always there, a non-judgmental ear, and even a partner to cure loneliness. As someone fascinated by the intersection of technology and human connection, I decided to dive deep into the world of "AI girlfriends." What I found was a landscape of incredible technology, genuine potential for good, and a troubling pattern of marketing that blurs the line between entertainment and therapy.

The core question that kept nagging at me was this: When an app markets itself as a tool for your MENTAL HEALTH, what does that actually mean? And how transparent are these companies about the risks? Let's unpack what I discovered.

What Exactly Are AI Companions?

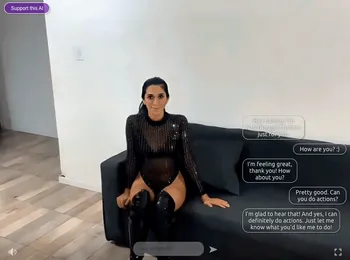

Before we get into the weeds, let's start with the basics. AI "girlfriends" or companions are advanced chatbots powered by the same kind of technology behind tools like ChatGPT—large language models (LLMs). This allows them to do more than just follow a script. They can remember your conversations, learn your personality, and generate surprisingly empathetic and human-like responses. For a deeper dive into the current landscape, check out my AI girlfriend landscape overview.

For many people, the appeal is obvious. In a world where loneliness is on the rise, having a 24/7 confidante who won’t judge or reject you can feel like a lifeline. It’s a safe space to practice social skills or just vent after a long day. But this is where the waters start to get murky.

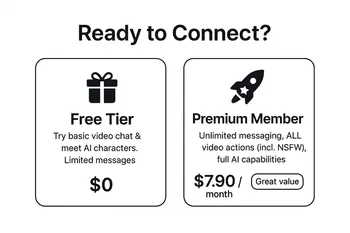

The Bait-and-Switch: Therapy Claims vs. The Fine Print

As I started exploring different apps, a disturbing pattern emerged. Many of these services use the language of mental wellness and therapeutic support in their advertising, only to completely walk back those claims in their terms of service—the legal fine print most of us never read.

I dug into a few of the popular platforms, and the contradiction was stark. It’s a classic bait-and-switch, promising a supportive, therapeutic experience to draw you in, while legally absolving themselves of any responsibility.

| App | The Marketing Promise | The Reality (Fine Print or Track Record) |
|---|---|---|
| Romantic AI | Explicitly states it "is here to maintain your MENTAL HEALTH" and acts as an "empathy"-driven friend. | The terms and conditions state it is "neither a provider of healthcare or medical Service nor providing medical care, mental health Service." |
| Replika | Markets itself as "the AI companion who cares" and is "Always here to listen and talk." | Has faced criticism for pushing conversations in an overly sexual direction, even when users are discussing sensitive, non-sexual topics. |
| Anima AI | Uses eye-tracking to measure "Attention Bias" as a marker for anxiety and offers an AI to help interpret results. | Clarifies that its AI "does not replace psychologists" but is meant to be a supplementary tool for them, a nuance easily missed by users. |
| SecretDesires.ai | Focuses on "immersive" romantic and erotic experiences in a secure space. | Suffered a massive data breach, exposing nearly two million sensitive, user-generated images, including non-consensual deepfakes. |

This isn't just a minor discrepancy; it's a fundamental deception. These apps, along with others like joi.com and xeve.ai, attract vulnerable users seeking support, then bury disclaimers stating that the AI might lie, provide harmful advice, or even be abusive. It’s a dangerous game that preys on our deepest need for connection.

The Good Side: Can Virtual Companions Actually Help?

To be fair, it's not all doom and gloom. I’ve read countless user testimonials from people who have found real, tangible benefits from these AI companions. We can't ignore the positive impact they can have, especially for certain individuals.

- Fighting Loneliness: For someone feeling isolated, an AI companion offers a constant source of interaction, which can genuinely alleviate feelings of loneliness.

- A Social Sandbox: If you struggle with social anxiety, chatting with an AI is a low-stakes way to practice conversation. You can build confidence and rehearse difficult dialogues without the fear of real-world judgment.

- A Feeling of Being Heard: Sometimes, you just need to get something off your chest. An AI provides a non-judgmental space to do that. In fact, some studies show a significant number of users feel their AI understands them better than a human partner.

The Dark Side: Dependency, Data, and Deeper Dangers

Despite the potential benefits, the ethical concerns are profound and can't be overstated. Mozilla researcher Misha Rykov put it bluntly: "AI girlfriends and boyfriends are not your friends... they specialize in delivering dependency, loneliness, and toxicity."

Here are the biggest risks I uncovered:

- Emotional Dependency: It's easy to become emotionally dependent on an entity that’s designed to be perfectly agreeable. This can lead to withdrawal from real-world relationships, deepening the very loneliness the user was trying to escape.

- Pervasive Data Privacy Risks: The intimate details you share with your AI companion are incredibly valuable data. A Mozilla Foundation report found that most of these apps have abysmal privacy practices, sharing your personal data with, or selling it to, third parties like Facebook and Google. The SecretDesires.ai data breach is a terrifying example of what can go wrong. You can read more about how AI girlfriends handle data in my other post.

- A Wild West of No Regulation: This industry is largely unregulated. There are no safety standards, no requirements for transparency, and no rules preventing an app from impersonating a licensed therapist.

- Worsening Mental Health: While some users feel better, broader research paints a concerning picture. One study found that more frequent use of romantic AI was linked to higher levels of depression and lower life satisfaction. That link suggests these apps may be a symptom of a problem that they ultimately make worse.

Your Checklist for Safer Interaction

If you're still curious and want to explore these apps, it's crucial to go in with your eyes wide open. Think of it as digital self-defense. Here’s a checklist to help you engage more mindfully.

- Know Your Goal: Are you here for entertainment or seeking genuine emotional support? Be honest with yourself and remember this is not a substitute for therapy.

- Become a Detective: Read the Terms of Service and Privacy Policy. Do a quick news search for the app's name + "data breach" or "scandal." Don't just trust the marketing.

- Guard Your Identity: Use a throwaway email address to sign up. Never share your real name, address, workplace, or other identifying information.

- Set Realistic Expectations: Remember you are talking to a sophisticated algorithm, not a conscious being. It is simulating empathy; it does not feel it.

- Monitor Your Feelings: Pay attention to how the app makes you feel. If you find yourself becoming dependent or withdrawing from real life, it's time to take a break.

The Path Forward: A Call for Honesty

AI companions are here to stay, and the technology will only get more sophisticated. The potential to use AI as a tool to support mental well-being is real, but it can only be realized through a culture of transparency and ethical design.

Companies need to stop the "bait-and-switch" marketing. They must be honest about what their products are: entertainment platforms with advanced chat features, not unlicensed digital therapists. As users, we need to be educated and critical consumers. By demanding accountability and prioritizing our own digital wellbeing, we can ensure that these powerful tools augment our lives rather than exploit our vulnerabilities.