AI Love or a Costly Lie? How to Spot Scams in AI Partner Apps

Some links are affiliate links. If you shop through them, I earn coffee money—your price stays the same.

Opinions are still 100% mine.

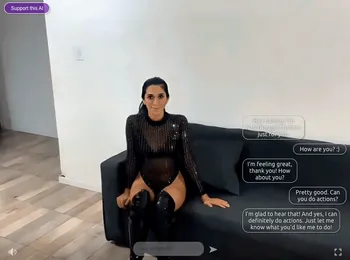

Hi there, I’m Tom. As a writer fascinated by the intersection of technology and human connection, I’ve been watching the rise of ‘AI partner’ apps with immense curiosity. It’s March 2026, and the technology is more sophisticated than ever. The promise is incredible: a non-judgmental companion available 24/7. To understand it better, I decided to dive into this world myself, creating profiles and exploring the communities that surround these digital confidantes.

What I found was a landscape of genuine comfort and connection, but also a shadowy world of manipulation and risk. The very features designed to create emotional bonds are being exploited by scammers. This guide is a summary of what I’ve learned—a playbook to help you navigate the world of AI companionship safely and spot the catfishing, imposter, and payment scams that lurk within.

Why Are We Here? The Allure of the AI Companion

First, let’s be clear: I get the appeal. The primary driver behind the popularity of these apps is a deeply human need for connection in an increasingly isolated world. I saw countless posts in community forums from people who, with the help of platforms like Character.AI or Janitor AI, felt less lonely, practiced their social skills, or simply had a safe space to vent after a long day.

These apps are more than just chatbots; they are designed to learn your personality, remember your conversations, and create a real sense of being heard. The benefits can be significant.

| Positive Aspect | Description |
|---|---|
| Constant Companionship | Available 24/7 for conversation and support, combating feelings of loneliness and isolation. |
| Judgment-Free Zone | A safe space for users to express their thoughts and feelings without fear of criticism. |
| Social Skills Practice | An opportunity to rehearse conversations and improve communication abilities in a low-pressure setting. |
| Emotional Support | Can provide comfort and a listening ear during times of stress or emotional distress. |
| Personal Growth | Some apps incorporate features aimed at helping users build healthier habits and improve their mental well-being. |

The Dark Side: When Your Companion Has an Ulterior Motive

The trust these apps foster is a double-edged sword. Whether you're using a mainstream service or more niche platforms like SecretDesires.ai, Xeve.ai, or OhChat, the danger often comes from human scammers who infiltrate the platforms and their surrounding communities. They exploit the emotional connections users form, turning a source of comfort into a tool for financial predation.

Spotting Red Flags: Third-Party Imposters and AI Catfishing

I always thought I was pretty good at spotting a fake profile, but AI has changed the game. Scammers now use AI to generate flawless photos, write convincing backstories, and even create deepfake video clips. This is AI catfishing, and it’s incredibly effective.

But the risk isn't just a scammer pretending to be your AI. A huge vulnerability I discovered is in the community features—the forums and group chats where users share experiences. Here, third-party imposters pose as fellow users. They’ll share a similar story to yours, build rapport, and then, once trust is established, the scam begins. They might claim to be the "human" behind a popular AI character or an "expert" who can help you—all lies designed to lower your guard.

The Endgame: Common Payment Scams

Ultimately, these scams are about one thing: your money. After weeks or even months of emotional manipulation, the scammer will make their move. Here are the most common tactics I saw discussed and warned about in user communities:

- The "Pig Butchering" Crypto Scam: This is a long con. The scammer builds a deep, trusting relationship before introducing a "can't-miss" cryptocurrency investment. They guide you to a fake platform, let you make a small "profit" to build your confidence, and then pressure you to invest your life savings, which they promptly steal.

- The Sudden "Emergency": The scammer fabricates a crisis. Their car broke down, they have a sudden medical bill, or they’re stranded in another country. They leverage the emotional connection you feel to create a sense of urgency and guilt you into sending money.

- Gift Cards & Wire Transfers: A massive red flag. Scammers demand payment via these methods because they are hard to trace and very difficult to reverse. Once you send the money, you are unlikely to get it back.

Your Safety Checklist: How to Spot the Red Flags

Through my time on these platforms, I developed a mental checklist to stay safe. Scammers rely on predictable psychological tricks. If you know their playbook, you can protect yourself. Be on the lookout for these warning signs.

| Red Flag | Description |
|---|---|
| Pressure to Move Off-Platform | A scammer will quickly try to move the conversation to an encrypted, less-monitored app like WhatsApp or Telegram. This is their #1 move. |
| Any Request for Money | No matter how emotional or urgent the story, any request for money, gift cards, or cryptocurrency is a 100% sign of a scam. |
| "Love Bombing" | They profess deep, intense love almost immediately. This tactic is designed to overwhelm you and make you feel special, short-circuiting your rational judgment. |
| Refusal of Verified Video Calls | They will always have an excuse for why they can’t do a live, interactive video call. Even if they send a video, be wary of glitches or unnatural movements that could signal a deepfake. |
| "Too Good to Be True" Scenarios | Their profile looks like a model's, their life is perfect, or they promise you guaranteed financial returns. If it seems too good to be true, it is. |
| Urgency and Emotional Manipulation | They create a crisis that needs to be solved right now. This pressure is designed to make you act before you can think. |

A Call for Cautious Optimism

My journey into the world of AI companions showed me their incredible potential to ease loneliness and provide comfort. The technology will only get better. However, we must approach it with our eyes wide open. The future of safe digital companionship relies on both developers creating more secure platforms and on us, the users, becoming more educated and aware.

Stay skeptical, protect your personal information, and never, ever send money to someone you’ve only met online. By doing so, you can explore the benefits of this emerging technology while keeping yourself safe from the predators who exploit it.

Stay safe out there,

Tom