AI Girlfriends: Safety, Support & Moderation Standards Reviewed

Some links are affiliate links. If you shop through them, I earn coffee money—your price stays the same.

Opinions are still 100% mine.

Hey everyone, Tom here. It’s March 28, 2026, and the world of AI companions is booming. The concept of an AI girlfriend—a personalized, digital partner available 24/7—has firmly moved from sci-fi to a mainstream reality on our phones. As someone who tracks how technology reshapes our connections, I wanted to look past the clever conversations and investigate the systems designed to protect users.

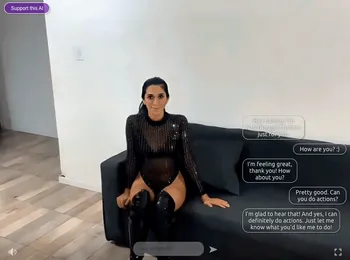

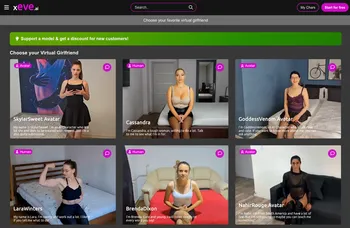

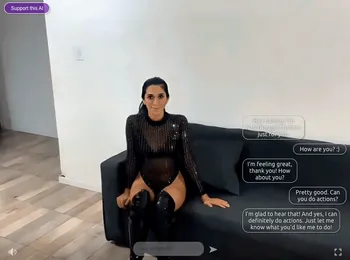

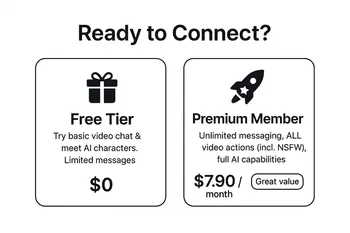

What happens when a chat takes a wrong turn? Who do you contact when you have a technical issue or a safety concern? This led me on a deep dive into the customer support and moderation standards of several popular AI companion services, including platforms like SecretDesires.ai and Xeve.ai. I focused on three key areas: how quickly they respond, how they handle escalating problems, and what safeguards they have in place to protect their communities. Here’s what I found.

First, What Exactly Is an AI Girlfriend?

Before we get into the safety protocols, let's be clear on what we're discussing. An AI girlfriend is not a simple chatbot. It's a complex AI designed to simulate a romantic partner. It learns from your conversations, remembers personal details, and adapts its personality to create a unique and deeply engaging experience.

This sophisticated interaction is powered by several key technologies working in concert:

| Technology | Its Role in Your AI Companion |
|---|---|
| Natural Language Processing (NLP) | Allows the AI to understand the nuances of human language and respond in a way that feels natural and coherent. |
| Machine Learning (ML) | Enables the AI to learn from every interaction, personalizing its responses and "remembering" details about you over time. |
| Generative AI | Creates entirely new text, voice messages, and even images, ensuring conversations feel dynamic and not repetitive. |
| Large Language Models (LLMs) | The massive, foundational "brain" that provides the AI with its vast knowledge and complex conversational abilities. |

Your Safety Checklist Before Starting an AI Relationship

Diving into the world of AI companionship is intriguing, but your digital well-being must come first. Based on my research, I developed this essential checklist for anyone considering an AI girlfriend app.

- Read the Fine Print First: Before downloading, take ten minutes to read the Privacy Policy and Terms of Service. These documents are where companies disclose how they use your data and what behavior is prohibited.

- Understand Where Your Data Goes: Every word you share is data. Be mindful of sharing extremely sensitive personal information.

- Set Healthy Boundaries: Decide what role you want the AI to play in your life and ensure you continue to nurture your real-world relationships.

- Locate the In-App Safety Tools: Learn where the "report" and "block" functions are and how to use them to flag inappropriate messages.

- Remember: It's Not a Therapist: While an AI can be a source of comfort, it is not a substitute for professional mental health support.

The Core Issue: Customer Support and Moderation Standards

An engaging AI is one thing, but a safe and supportive platform is everything. Here’s how the services I reviewed stack up in the areas that matter most.

How Quick is the Help? Reviewing Support Responsiveness

When you have a problem, whether it's a billing error or a safety concern, you want help fast. Most AI companion services offer a standard support package: an FAQ page, email support, and in-app reporting. However, the response times I experienced varied dramatically. Some platforms provided a helpful, human-authored reply within 24 hours. Others left me with a generic, automated response that didn't address my issue. For me, the true test of a platform's commitment is how easy it is to reach a real person when you need one.

From Bot to Human: The Escalation Process

What happens when an automated system or FAQ can't solve your problem? A clear escalation process should seamlessly transfer your issue to a human agent. Unfortunately, most apps are not transparent about how this works. It often feels like a black box, leaving users wondering if their concern was ever seen by human eyes.
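One way to picture a transparent escalation process is as a small set of explicit rules. The sketch below is my own illustration in Python, not how any of the platforms I reviewed actually work: the `Ticket` fields, the category names, and the 24-hour limit are all assumptions chosen for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical values, chosen only for illustration.
SAFETY_CATEGORIES = {"safety", "harassment", "self_harm"}
MAX_WAIT = timedelta(hours=24)


@dataclass
class Ticket:
    category: str              # e.g. "billing" or "safety"
    opened_at: datetime        # when the user first reported the issue
    bot_resolved: bool = False # True if the automated reply solved it
    history: list = field(default_factory=list)


def needs_human(ticket: Ticket, now: datetime) -> bool:
    """Return True when the ticket should be handed to a human agent."""
    if ticket.category in SAFETY_CATEGORIES:
        return True  # safety reports always go to a person
    if ticket.bot_resolved:
        return False  # nothing left to escalate
    # Unresolved tickets escalate once they have waited too long.
    return now - ticket.opened_at >= MAX_WAIT


def escalate(ticket: Ticket, now: datetime) -> str:
    """Route the ticket and record the decision so the user can see it."""
    if needs_human(ticket, now):
        ticket.history.append("escalated_to_human")
        return "human"
    ticket.history.append("handled_by_bot")
    return "bot"
```

The point of the `history` list is visibility: if a platform recorded and showed this kind of trail, users would not have to guess whether a person ever looked at their report.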

Building a Safer Space: A Look at Community Safeguards

To foster a safe environment, platforms must combine clear rules with effective enforcement tools. The most responsible services use a multi-layered approach to safety.

| Safeguard | Description |
|---|---|
| Community Guidelines | Explicit rules that prohibit harassment, hate speech, illegal content, and other harmful behaviors. |
| Content Moderation | A combination of AI filters for real-time detection and human moderators for reviewing complex cases. |
| User Reporting Tools | Easy-to-find features that empower users to flag offensive content and block unwanted interactions. |
| Age Verification | Systems designed to ensure users are of the appropriate age to use the service (typically 18+). |
| Mental Health Disclaimers | Clear statements that the AI is not a medical professional, often with links to crisis support resources. |
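To show how the "AI filter plus human moderator" layer in the table can fit together, here is a minimal Python sketch. It is an assumption-heavy illustration, not any platform's real pipeline: the keyword list stands in for a trained classifier, and the names `risk_score`, `moderate`, and both thresholds are hypothetical.

```python
from collections import deque

# Hypothetical thresholds and term weights, for illustration only.
BLOCK_THRESHOLD = 0.9    # at or above this, block automatically
REVIEW_THRESHOLD = 0.5   # at or above this, ask a human to decide
FLAGGED_TERMS = {"threat": 0.95, "insult": 0.6}

# Messages waiting for a human moderator.
review_queue = deque()


def risk_score(message: str) -> float:
    """Toy stand-in for an automated filter: highest weight of any flagged word."""
    words = message.lower().split()
    return max((FLAGGED_TERMS.get(word, 0.0) for word in words), default=0.0)


def moderate(message: str) -> str:
    """Apply the layers in order: auto-block, human review, or allow."""
    score = risk_score(message)
    if score >= BLOCK_THRESHOLD:
        return "blocked"
    if score >= REVIEW_THRESHOLD:
        review_queue.append(message)  # complex case: leave it to a person
        return "needs_human_review"
    return "allowed"
```

The middle band is the important design choice. Anything the automated filter is unsure about goes to a person instead of being silently allowed or silently blocked.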

The Big Questions: Ethical Concerns and the Road Ahead

For all their potential to combat loneliness, these platforms present serious ethical challenges. The risk of emotional dependency is significant, and the vast amount of intimate data they collect raises major privacy concerns. Moderating content at scale is incredibly difficult, and some harmful content will inevitably slip through automated filters.

Looking forward, the future of ethical AI companions will hinge on a deeper, more transparent commitment to user well-being. I anticipate a push for industry-wide safety standards, more advanced proactive moderation, and clearer communication from developers.

Ultimately, the world of AI girlfriends is a fascinating and complex new frontier. It offers novel forms of connection but demands that we, as users, remain informed and vigilant. The quality of a platform's customer support and the strength of its moderation are not just minor features—they are the very foundation of a safe and trustworthy digital relationship.