AI Girlfriends and US Law: A 2026 Guide to Privacy & Rules

Some links are affiliate links. If you shop through them, I earn coffee money—your price stays the same.

Opinions are still 100% mine.

I’ve spent the last few months on a fascinating journey, not through a new country, but through a new kind of relationship—the one people are forming with AI companions. What started as professional curiosity about "AI girlfriends" quickly became a deep dive into the technology, the human connection it fosters, and the complex legal web now being woven around it.

These aren't just simple chatbots anymore. They learn, they remember, they offer support. And as I explored, I realized that while we're focused on the emotional connection, a crucial conversation is happening in the background: the one about our rights, our data, and the responsibilities of the companies creating these digital partners.

So, let's pull back the curtain. Whether you're a curious user or a developer in this space, this is what you need to know about the U.S. laws shaping the future of AI companionship.

The Rise of Digital Connection and the Need for Rules

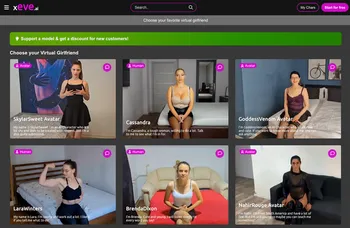

Before we get into the legal jargon, it's important to understand why regulators are suddenly paying so much attention. AI companions from platforms like golove.ai, couple.me, Character.AI, Paradot, and CrushOn.AI offer some real benefits. I’ve read countless user stories, and many of them describe these apps helping people combat loneliness, practice social skills in a judgment-free zone, and find a constant source of support. It’s a modern answer to an age-old human need for connection.

But with any new, powerful technology that touches our lives so intimately, there are challenges. The conversations we have with an AI girlfriend can be deeply personal. We might share our hopes, our fears, our insecurities—information that is incredibly sensitive. This raises critical questions:

- Where does that data go?

- How is it being used?

- Could it be used to manipulate us?

- And what happens when these platforms are used by minors?

These are the very questions that have prompted government bodies to step in.

The Federal Watchdog Takes Notice: The FTC's Inquiry

The big headline in this space came back in September 2025, when the Federal Trade Commission (FTC)—the nation's top consumer protection agency—launched a major inquiry into seven of the biggest AI companion companies. When I first read the announcement, it was clear this wasn't just a routine check-in. The FTC is taking a hard look at the engine room of this industry.

The inquiry focuses on how these companies:

- Handle our data: How is personal and sensitive information collected, used, and protected? I've written a more detailed overview of AI girlfriend data practices in another post.

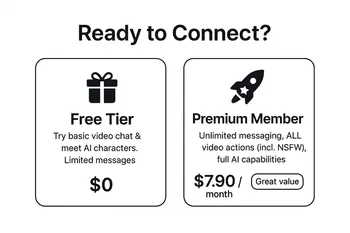

- Monetize interactions: Are they using our conversations to sell us things or for targeted advertising?

- Design their AI: How are the AI's "personalities" built and tested for manipulative behaviors?

- Protect younger users: What safeguards are in place to prevent harm to children and teens?

This inquiry is a massive signal. It tells providers that the "move fast and break things" era is over. The government is demanding accountability and transparency, which is ultimately a good thing for all of us as users.

A Patchwork of Protection: How State Privacy Laws Apply

While the FTC operates at the federal level, much of the action on data privacy is happening in the states. Without a single, overarching federal privacy law, a "patchwork" of state-level acts has emerged, and they absolutely apply to AI companion apps.

I’ve spent a lot of time sifting through these, and while they differ in the details, they share a common goal: giving you more control over your personal information.

| State Law | What It Means for Your AI Companion Data |
|---|---|
| California (CCPA/CPRA) | You have the right to know what data an app collects on you, the right to have it deleted, and the right to stop the company from selling or sharing it. |
| Virginia (VCDPA) | Companies need your explicit "opt-in" consent before they can process sensitive data (which many AI conversations would qualify as). |
| Colorado (CPA) | You can opt out of your data being used for targeted ads or "profiling" that could have a significant effect on you. |

These laws mean that AI relationship providers can't just collect our data without clear rules. They have a legal obligation to be transparent and respect our choices.

Even more pointedly, California and New York have passed landmark laws aimed directly at AI companions. California's SB 243, which took effect on January 1, 2026, and New York's AI Companion Models Law, in force since late 2025, both mandate two critical things:

- Clear Disclosure: The app must tell users (especially minors) that they are talking to an AI, not a person.

- Crisis Protocols: The AI must be designed to detect and respond appropriately when a user expresses thoughts of self-harm, often by providing resources for help.

Overview of Regulatory Pressures and Compliance Expectations

If I were building an AI companion app today, navigating this legal landscape would be my top priority. The regulatory pressures are real, and getting it wrong could mean hefty fines and a total loss of user trust. For any provider in this space, here’s a checklist of what’s expected.

Be Radically Transparent

- AI Disclosure: Clearly and repeatedly disclose that the user is interacting with an AI. No gray areas.

- Plain-Language Policies: Write your privacy policy in simple terms. Explain what data you collect, why you collect it, and how you use it.
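
For the developers reading, here is a minimal Python sketch of what "clearly and repeatedly" could look like in practice. Everything in it is my own illustration: the `DisclosureTracker` class, the notice wording, and the three-hour default are assumptions, not values taken from any platform, so check the interval against the laws that apply to you.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical notice text; real wording should be reviewed by counsel.
AI_NOTICE = "Reminder: you are chatting with an AI, not a person."


@dataclass
class DisclosureTracker:
    """Tracks when one user last saw the AI notice (illustrative helper)."""

    # Assumed placeholder interval; confirm the required cadence yourself.
    interval: timedelta = timedelta(hours=3)
    last_shown: Optional[datetime] = None

    def wrap(self, reply: str, now: datetime) -> str:
        """Prepend the notice on the first reply and whenever the interval has elapsed."""
        due = self.last_shown is None or now - self.last_shown >= self.interval
        if due:
            self.last_shown = now
            return f"{AI_NOTICE}\n{reply}"
        return reply
```

The idea is to route every outgoing reply through `wrap`, so the reminder cannot be skipped by a code path that forgets to call it.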

Prioritize Privacy & Security

- Privacy by Design: Build your app with privacy as a core feature, not an afterthought.

- State Law Compliance: Comply with all relevant state laws (CCPA, VCDPA, etc.). This means providing easy ways for users to access, delete, and control their data.

- Security Audits: Conduct regular security audits to protect sensitive user conversations from breaches.
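
To make "easy ways for users to access, delete, and control their data" a bit more concrete, here is a rough Python sketch of the three requests the state laws above tend to have in common. The `PrivacyRequests` class, its method names, and the in-memory dictionary are all hypothetical stand-ins; a real service would also need identity verification, audit logging, and deletion from backups.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class UserRecord:
    """Everything held about one user (illustrative shape)."""

    messages: List[str] = field(default_factory=list)
    allow_sale_or_sharing: bool = True


class PrivacyRequests:
    """In-memory stand-in for a real data store (hypothetical)."""

    def __init__(self) -> None:
        self._users: Dict[str, UserRecord] = {}

    def record_message(self, user_id: str, text: str) -> None:
        self._users.setdefault(user_id, UserRecord()).messages.append(text)

    def access(self, user_id: str) -> dict:
        """Right to know: return a copy of what is held for the user."""
        rec = self._users.get(user_id, UserRecord())
        return {
            "messages": list(rec.messages),
            "allow_sale_or_sharing": rec.allow_sale_or_sharing,
        }

    def delete(self, user_id: str) -> bool:
        """Right to delete: drop the record; True if something was removed."""
        return self._users.pop(user_id, None) is not None

    def opt_out(self, user_id: str) -> None:
        """Right to opt out of sale or sharing."""
        self._users.setdefault(user_id, UserRecord()).allow_sale_or_sharing = False
```

Keeping all three operations behind one small interface makes it easier to show a regulator, or a user, exactly what happens when a request comes in.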

Protect Minors

- Robust Age-Gating: Implement strong age verification. A simple "Are you over 18?" checkbox is no longer sufficient.

- COPPA Compliance: Strictly comply with the Children's Online Privacy Protection Act (COPPA) for any users under 13.

- Age-Appropriate Design: Ensure content and interactions are appropriate for all potential age groups accessing the service.
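
One caveat before the next snippet: it does not verify anyone's age. It only sketches, in Python, how an app might route a user once a birthdate has been established by whatever verification method you trust. The function names and the tier labels (`under_13`, `teen`, `adult`) are my own assumptions for illustration.

```python
from datetime import date


def age_on(birthdate: date, today: date) -> int:
    """Whole years between birthdate and today."""
    years = today.year - birthdate.year
    # Subtract one if this year's birthday has not happened yet.
    if (today.month, today.day) < (birthdate.month, birthdate.day):
        years -= 1
    return years


def age_tier(birthdate: date, today: date) -> str:
    """Map an age to a hypothetical handling tier."""
    age = age_on(birthdate, today)
    if age < 13:
        return "under_13"  # COPPA territory
    if age < 18:
        return "teen"  # minor-specific safeguards
    return "adult"
```

Passing `today` in explicitly, rather than calling `date.today()` inside the function, keeps the birthday edge cases easy to test.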

Design for User Well-being

- Crisis Response: Develop and implement a crisis response protocol for users in distress, as mandated by laws in CA and NY.

- Ethical Design: Avoid designing manipulative features that prey on emotional vulnerability for profit (e.g., creating artificial distress to drive purchases).

- Promote Healthy Use: Focus on creating a supportive experience that encourages, rather than replaces, real-world connection.
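
As a very rough illustration of a crisis response hook, here is a Python sketch that checks a message against a short pattern list and returns a resource message. The patterns, the `crisis_reply` function, and the reply wording are assumptions of mine, and keyword matching alone will miss a lot; a production system would pair something like this with a trained classifier and human-reviewed protocols. The 988 Suicide & Crisis Lifeline is a real US resource.

```python
import re
from typing import Optional

# Deliberately small, illustrative pattern list; not a complete detector.
CRISIS_PATTERNS = [
    r"\bkill myself\b",
    r"\bsuicid",  # matches "suicide" and "suicidal"
    r"\bself[- ]harm\b",
    r"\bend my life\b",
]

CRISIS_REPLY = (
    "It sounds like you are going through a lot right now. "
    "If you are in the US, you can call or text 988 to reach the "
    "988 Suicide & Crisis Lifeline."
)


def crisis_reply(message: str) -> Optional[str]:
    """Return a resource message if the text matches a crisis pattern, else None."""
    text = message.lower()
    if any(re.search(pattern, text) for pattern in CRISIS_PATTERNS):
        return CRISIS_REPLY
    return None
```

Running this check before the AI's normal reply is generated means the safety path does not depend on the model's own judgment.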

The Future is a Partnership

The world of AI companions is a new frontier in the human-technology relationship. The legal landscape is just now catching up to the innovation. As a user, being informed is your best tool. As a provider, ethical design and transparent compliance are the only paths forward.

This technology has the potential to bring a lot of good into the world, but it requires a partnership—between the people who build it, the regulators who oversee it, and all of us who use it—to ensure our digital hearts are in safe hands.

- Tom